Writing Repeat, Writing Repeat, …

For the past several years, I’ve supplemented the usual high-stakes writing assignments (papers over 1000 words) with short reflection posts to the course LMS. These essays are limited to 250-300 words, and I grade them on a 5-point scale. Depending on the course, I might assign anywhere from 8 to 12 posts. They are quick to read, easy to grade, and valuable for enhancing class discussions. Students score samples at the beginning and mid-point of the semester to calibrate themselves to the rubrics and to establish a standard for quality work.

My intention with these assignments is to cultivate regular writing (and thus thinking) habits, to prepare students for classroom participation, and to give students many opportunities to practice putting arguments forward and supporting them with class resources. I also make a brief post to the entire class that summarizes my feedback and offers suggestions for improvement; with it, some students are able to make significant improvements in mechanics and style.

The majority of students, however, find a formula that earns them a ‘4’ on a continual basis. Part of this trend stems from the bell-curve distribution of performance and the limited point values to choose among. However, I am starting to believe that the repetitive nature of the assignment encourages students to settle into a groove (or funk?) that will predict their performance for the majority of the semester. Although the topics for the prompts change from assignment to assignment, the process of writing remains unchanged.

My recent experience in article co-authorship, collaborative peer review, blogging, and the various genres of writing one finds in the life of an academic (formal letters, public presentations, assessment reports, scholarship, and creative work) seems at odds with the repetitive writing format we often expect of our students. We train them to write in the style of our disciplines or to the “standards”-based model of expository writing. If writing is not improved in repetitive writing activities of the same type, then perhaps we are not introducing students to enough variety of writing practices to give them the perspective needed to make notable changes.

I plan to diversify the short writing assignments in the future, although I will still use a simple, 5-point rating system. In addition to posts to a discussion forum, I’m considering a class blog project, co-authored papers, and creative/fun fictional writing, among others, to stretch the students’ exposure to various styles and formats. The old adage that one improves by repeating a task over and over again is often challenged when a variety of complementary skills is lacking. This is why athletes cross train and why our students learn to think analytically, quantitatively, and critically in a diverse, core curriculum.

I’m curious what types of short writing assignments others use.

Teach and Learn Carnaval with Nearpod

Teachers have all the fun when it comes to new educational resources. Not only do we play with the latest toys at conferences, workshops, and meetings, but we get the “instructor version” with bells and whistles that students are not privy to knowing: assessment data, practice quiz questions, presentational aids, and suggested exercises. These bonuses, however, usually contain resources that could enhance learning if given over to students. Their very nature represents the contexts and layers involved in the curriculum and an open engagement with students invites self-guided learning experiences.

The interactive presentational software Nearpod permits this role reversal with a recent update to its software: one mobile app that can run either student or teacher versions. While this simplification offers convenience, it is serendipitous for the pedagogue with a class-flipping slant.

Teams of students responsible for presenting Robert Schumann’s Carnaval, Op. 9 will learn more from the experience using Nearpod than PowerPoint or Keynote when they engage with the interactive slides. Some of those features provided by the Nearpod software include polls, quizzes, and drawing exercises. The important pieces of the puzzle involve an intentional and thoughtful planning stage as well as an assessment and reflection stage. Students need to take ownership of important themes and concepts from the lesson and evaluate their role in bringing their classmates to them.

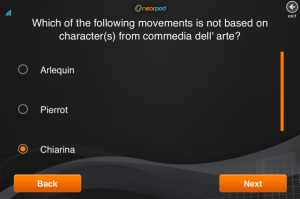

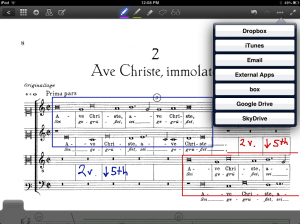

Here are two examples of the drawing interactive slide from a Nearpod presentation on Carnaval:

The poll slides function very similarly to the clicker poll technology and provide immediate material for in-class discussion.

Finally, short quizzes scattered throughout presentations hold students responsible for assessment.

While these tools were originally intended for teachers to employ in lectures, the easy access for students permits them to reverse the classroom and think about the important contexts of the course beyond the required content. It’s ok to put students in the driver’s seat from time to time as long as you know when to take the keys back.

Annotating Josquin with Goodnotes

There are several annotation apps available for iPad, including iAnnotate and Penultimate, but Goodnotes has been my favorite so far for the music classroom, particularly for score analysis. The functionality of Goodnotes across multiple platforms and the ease with which scores and annotations are imported and exported provides ample opportunities for making analysis more interactive in the flipped environment. For students and classrooms with easy access to mobile technology, Goodnotes is a low-cost solution to expanding textbook resources quickly and efficiently.

I often introduce peer analysis activities to encourage students to think beyond the isolated examples of the course anthology. Most music history textbooks, for example, include Josquin’s motet “Ave Maria…virgo serena” to illustrate the new compositional techniques of the Franco-Flemish school circa 1500 CE, namely those described by Pietro Aron in Toscanello in musica (Venice, 1523/rev. 1539). The most striking of these features include the pervasive “points of imitation,” paired voices, and homophonic textures, which reflect a sensitivity to text setting achieved by composing all voices at once (vertically) instead of in linear stages. Simulating the stages of composition and text setting with an activity facilitated by Goodnotes helps students solidify concepts from the book and anthology and reflect on the conditions for composition, performance, and dissemination.

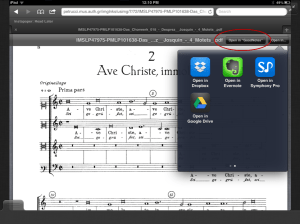

After discussing “Ave Maria virgo serena,” each team of students is assigned a different motet by Josquin to analyze and present to the class. Goodnotes facilitates teamwork outside of class through its compatibility with a variety of applications, such as Dropbox, Evernote, and Google Drive. Using the file-sharing capabilities of these external programs, individual students can each work in a specific color to represent their contribution.

One advantage of Goodnotes is its compatibility with Safari on the iPad, which permits students to seek and acquire examples using their own judgment rather than relying on the instructor to provide them. Note in the second screen shot that the Safari app provides a box for opening .PDF files from web resources, like the International Music Score Library Project, directly into Goodnotes.

The Goodnotes workstation will assist student learning outside of the classroom, offering the autonomy of choosing a variety of annotation styles and of managing notes in folders and through file-sharing programs. These activities solidify important concepts from the textbook by engaging students or teams of students in the steps of acquiring, annotating, and discussing musical form, style, and function in the context of their readings.

Teacher Evaluations

Lately I’ve been thinking about assessment and how good teaching is measured in higher education. Primary and secondary schools now rely heavily on student success with standardized tests and teacher success in implementing prescribed instruction. While this system is designed to evaluate good teaching by its impact on student learning, the assessment measures would not be appropriate for higher education, which shifts from competency to higher-order skill objectives like critical thinking and global awareness.

Ever since I entered higher education 15 years ago, first as a student and now as a professor, I’ve seen teachers evaluated through three main measures: teaching demonstrations, classroom evaluations, and student evaluations. All three of these components scrutinize the teacher rather than the learner, because measuring student learning in higher education is much more subjective and dependent on institutional makeup and mission, save some attempts at gathering quantitative data. How can measurements of good teaching be tied to significant data on student learning in higher education?

Teaching demonstrations are the first assessment of collegiate teachers, during the interview process for new positions. The weight placed on the demonstration will vary depending on the type of institution–R1 vs. SLAC–and perhaps to some extent on the discipline (i.e., humanities, STEM, professional degree programs, etc.). Teaching demonstrations are inherently focused on the teacher, even though teaching implies that there is also a learner. Search committees are looking for a fit, someone that brings a breath of fresh air or conforms to departmental culture. Teachers interviewing for positions are measured on performance, not impact.

Classroom evaluations are completed by colleagues and administrators for use in a professor’s promotion and tenure dossier. Like teaching demonstrations, the focus of a classroom evaluation is on the teacher: was the instructor prepared? does the instructor demonstrate competency with the topic? did the instructor begin and end class on time? Even questions more sensitive to student learning still focus on the teacher: did the instructor make effective use of technology and media? did the instructor answer student questions clearly and effectively? did the instructor engage all students in the class?

Student evaluations cover many of the same questions as classroom evaluations. And even if a focus on teacher performance is still present, there is also the new angle of student satisfaction. While a liberal education aims to empower students to be critical and reflective, the intent behind student evaluations is not always matched by their use. Some institutions try to mediate the schism by adding questions that invite students to reflect on their performance. But in many cases those questions are absent or not effectively integrated with the assessment of teaching and learning.

So why is teaching evaluated with little consideration for learning? One of the great rewards of being a college professor is having some autonomy over curriculum and instruction. Any measure to assess student learning through standardized testing and an adherence to prepackaged courses would threaten the whole notion of a liberal education. But is there a more effective way to measure good teachers in higher education that places as much emphasis on student learning as on teacher performance? I’d be interested in hearing how other institutions have handled evaluations.

Planned and Surprise Debates

Debate is an effective pedagogical tool for engaging students in the classroom and guiding them through the pros and cons of complex issues. In my music classes, I tend to initiate two types of debates, planned and surprise. Both require active participation of all students, but exercise different skill sets.

Planned debates require students to conduct independent research and develop cogent talking points with teamwork and careful preparation. I announce a topic from one of the main themes in the textbook in advance and assign oppositional sides and supporters. Each student is given a worksheet to complete as a prerequisite for the debate. Outside of class, students work with colleagues on their side of the debate through Moodle discussion forums and chats, and a debate moderator I assign ensures that each student participates. While the actual debate itself can be lively and enriching, the gem of the activity lies in what students accomplish prior to the debate. Planned debates demonstrate student success in speaking, research, collaboration, and argument; however, the skills underutilized include listening, quick thinking, and adaptation, all targeted by the surprise debate.

Surprise debates are as spontaneous for the professor as they are for students. Whereas planned debates benefit from predetermined reference to specific topics from the textbook, surprise debates are effective in intervening when the primary course objectives are under scrutiny. I have initiated surprise debates when discussions are lackluster or when students are resistant to learning. When needed, I ask students to stand and without hesitation move to oppositional sides at the edges of the classroom. An object representing a speaker’s staff is passed among students who are immediately responsible for addressing the primary issue; students can change sides at any time. I observe silently and tap students who’ve just made profound statements to join me in the middle of the room so that all students are eventually pressed to speak. While much of the learning in planned debates is front-loaded, the most beneficial aspect of surprise debates is the follow-up discussion. Although I have not tried it, Twitter feeds and periodic clicker polls projected on the screen could assist students in monitoring changes in opinion and the direction of the discussion; both would be helpful in the period of reflection.

To maximize the potential gain of debates in music courses, it is important to consider targeted learning outcomes. Often music courses for undergraduates have specific content and perhaps one or more general education (liberal arts) objectives. While these should be at the forefront when engaging and assessing both planned and surprise debates, one should not forget the broader goals of higher education and the variety of transferable skill sets that debates require: critical thinking, adaptability, research, and public speaking, to name a few. As Snider and Schnurer have effectively presented, exercising these skills through debate across the freshman and sophomore curricula will help prepare students for upper-level courses, graduate school, and future careers.

Too Many Graded Assignments

Over the past few years I’ve added more and more graded activities to my classes. In a 2006 section of early music history, there were 8 total graded items on the syllabus (participation, one presentation, two quizzes, two short papers, a midterm exam, and a final exam; the final exam was worth 40% of the final grade). My 2011 section of early music history had 24 graded items (participation, 12 reflections posted to the online discussion forum, 4 quizzes, 2 tests, 2 short papers, a presentation, a midterm exam, and a final exam; the final exam was worth 20% of the final grade). My recent music theory classes had daily assignments and sight singing recordings, my music history classes had regular discussion forum postings and listening quizzes, and my world music classes had frequent vocabulary and map quizzes. My intention in increasing the number of graded activities during the course of the semester was understandable: students were engaged with the material on a daily basis; I could monitor whether students were keeping up with the content; and I could assure the grade-obsessed students that their poor performance on one quiz would have minimal impact on their final average.

In light of some recent findings on student learning, such as the Collegiate Learning Assessment, I’ve become more critical of this trend. Grades, we need to remember from time to time, are a score of performance and not always an adequate indicator of learning and the lasting impact of a course. If students can amass 60% of their final grade from low impact/low challenge activities that can be completed the night before, will they be prepared for sustained projects? How can we encourage students to conduct self-guided, regular learning habits when we prompt them with dozens of assignments? Will students be successful after graduation with few high-stakes assessment measures?

One approach may be to require regular learning activities, but grade them only sporadically. I’ve applied this approach through surprise (i.e., “pop”) quizzes that permit notes (but not textbooks) or through written reflections, so that students are encouraged to read chapters regularly. Imagine an online discussion forum or quiz for each class meeting that is graded on a few random days during the semester.

Another approach is to tie a variety of learning activities toward one big “performance” in a flipped classroom. The Reacting to the Past (RTTP) gaming model inspires students to guide themselves through reading, writing, and problem solving exercises outside of class in order to “win.” Student performance in the classroom is assessed, but the various learning measures leading up to the performance are not graded.

A third approach that comes to mind is what I call “participatory homework” in my music theory classes. In addition to a handful of homework assignments that students submit in Finale, daily exercises from the workbook or Moodle are tied to attendance. Students are counted as present in class only if they have completed the preliminary work.

A gradual shift toward numerous graded assignments resembles trends in K-12 education, easing the transition to a collegiate learning environment. The intent is good; an aggregate of numerous, low-stakes assessments will help more students receive passing grades. But will this lead to success in the future? Life and a competitive global marketplace will have numerous high-stakes challenges. Will higher passing and graduation rates attest to the impact of an institution on student learning and post-graduation success? I suppose that depends on who answers.

Targeting Technology Toward Pedagogy

In recent years as I’ve gradually begun integrating technology into my teaching in and out of the classroom, I’ve guided my pursuits by the following phrase: “allow technology to enhance, not dictate, your pedagogy.” While the message may seem naive and a bit too simplistic for the complexities of instruction in 21st-century higher education, I remain confident that its guidance has served me well. I’ve witnessed the rise and fall of the “next big thing” and dodged a slew of aggressive advertisements by companies with devices that are already obsolete. Student engagement with technology does not always translate to higher scores, skill sets, and understanding.

Last week I had the privilege of attending my first “un-conference,” Flip Camp Music Theory 2013, at Charleston Southern University, which embraced the possibilities of technology wholeheartedly. Conference organizers Kris Shaffer, Bryn Hughes, and Phil Duker have pioneered the application of social media, just-in-time teaching, and screencasting, among other techniques, to the music theory and musicianship classrooms. As a musicologist straying from my usual conference fare, I was immediately exposed by my lack of an aluminum MacBook Pro. But after a quick Twitter tutorial, I was ready to go!

What really impressed me about this conference was that technology was targeted toward a primary pedagogical method, the flipped classroom. The show-and-tell and open discussion forum continually addressed the possibilities that arise when students take responsibility for the content out of the classroom. Technology did not take the spotlight away from the professor; the flipped environment did, so that students could apply what they learned out of class to activities in the classroom instead of sitting passively in a lecture.

Despite my heavy adoption of iPads for classroom activities, I remain relatively low tech: I do not correspond with my students via Twitter or Facebook; I still administer Bluebook exams; and I write my first drafts in pencil. However, I am encouraged to continue exploring new platforms of instruction when I see colleagues directing new technological possibilities toward specific learning outcomes. Leaving the un-conference, I was already brainstorming how I could apply what I learned to enhancing my own pedagogical methods. I shall share soon enough!